Stochastic gradient descent (often abbreviated SGD) is an iterative method for optimizing an objective function with suitable smoothness properties (e...

50 KB (6,600 words) - 10:13, 31 May 2024

of gradient descent, stochastic gradient descent, serves as the most basic algorithm used for training most deep networks today. Gradient descent is based...

36 KB (5,280 words) - 10:08, 19 May 2024

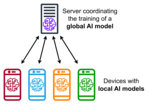

Federated learning (redirect from Federated stochastic gradient descent)

of stochastic gradient descent, where gradients are computed on a random subset of the total dataset and then used to make one step of the gradient descent...

51 KB (5,963 words) - 19:17, 13 May 2024

Online machine learning (redirect from Incremental stochastic gradient descent)

out-of-core versions of machine learning algorithms, for example, stochastic gradient descent. When combined with backpropagation, this is currently the de...

25 KB (4,740 words) - 03:53, 2 May 2024

Backpropagation (section Second-order gradient descent)

can be derived through dynamic programming. Gradient descent, or variants such as stochastic gradient descent, are commonly used. Strictly the term backpropagation...

54 KB (7,493 words) - 11:15, 30 May 2024

Stochastic gradient Langevin dynamics (SGLD) is an optimization and sampling technique composed of characteristics from stochastic gradient descent, a...

9 KB (1,370 words) - 22:11, 1 March 2024

Gradient descent · Stochastic gradient descent · Wolfe conditions · Absil, P. A.; Mahony, R.; Andrews, B. (2005). "Convergence of the iterates of Descent methods...

29 KB (4,566 words) - 06:41, 11 March 2024

descent · Stochastic gradient descent · Coordinate descent · Frank–Wolfe algorithm · Landweber iteration · Random coordinate descent · Conjugate gradient method · Derivation...

1 KB (109 words) - 05:36, 17 April 2022

for all nodes in the tree. Typically, stochastic gradient descent (SGD) is used to train the network. The gradient is computed using backpropagation through...

9 KB (954 words) - 19:30, 25 December 2022

being stuck at local minima. One can also apply a widespread stochastic gradient descent method with iterative projection to solve this problem. The idea...

23 KB (3,496 words) - 08:22, 5 March 2024